Last time I wrote about building a proxy for explosive carrying, replicating the work of Gradient Sport. This time I applied the same acceleration logic to my Dribbling from a Standstill proxy — and then tested which method actually works better.

The two approaches

The simple method (my original) asks a blunt question: was the player basically standing still before the take-on, yes or no? It uses a hard threshold on the previous action's speed and displacement — if both are below a cutoff, the take-on counts as "from standstill."
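As a rough illustration, the simple check could look something like the sketch below. The cutoff values, the units, and the `PrevAction` container are placeholders I've made up for the example; the post doesn't state the real thresholds.

```python
from dataclasses import dataclass

# Hypothetical cutoffs, for illustration only (not the values used in the post).
MAX_PREV_SPEED = 1.0         # metres per second
MAX_PREV_DISPLACEMENT = 2.0  # metres


@dataclass
class PrevAction:
    """Summary of the action immediately before the take-on (hypothetical container)."""
    speed: float         # speed during the previous action
    displacement: float  # distance covered by the previous action


def is_standstill_simple(prev: PrevAction) -> bool:
    """Return True only if BOTH speed and displacement are below their cutoffs."""
    return prev.speed < MAX_PREV_SPEED and prev.displacement < MAX_PREV_DISPLACEMENT


# Usage: a slow, short previous action counts; a long carry does not.
slow_and_short = is_standstill_simple(PrevAction(speed=0.4, displacement=1.2))
long_carry = is_standstill_simple(PrevAction(speed=0.4, displacement=8.0))
```

The point of the sketch is the hard yes/no: there is no score and no memory of earlier actions.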

The complex method tries to be smarter. Instead of a binary yes/no, it builds a confidence score from five different signals: how fast the player was moving just before the take-on, the physical displacement from the previous action's end point, whether the previous action type implies movement (a receival vs. a dribble), and two rolling averages over the last three actions for speed and displacement. Each signal gets a weight, and if the combined score exceeds 0.75, the take-on counts as "from standstill." It also uses possession-aware groupings, so it never accidentally looks at the previous action from a different possession or team.
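A minimal sketch of that scoring step, assuming each of the five signals has already been scaled to a value between 0 and 1 (where 1 means "strongly suggests standing still"). The signal names and weights are invented for illustration; only the 0.75 threshold comes from the description above.

```python
from typing import Mapping

# Hypothetical weights that sum to 1.0; the real weights are not given in the post.
WEIGHTS = {
    "prev_speed": 0.30,            # speed just before the take-on
    "prev_displacement": 0.25,     # distance from the previous action's end point
    "prev_action_type": 0.15,      # e.g. a receival scores higher than a dribble
    "rolling_speed": 0.15,         # rolling average of speed, last three actions
    "rolling_displacement": 0.15,  # rolling average of displacement, last three actions
}
THRESHOLD = 0.75  # threshold stated in the post


def standstill_score(signals: Mapping[str, float]) -> float:
    """Weighted sum of the five signals, each assumed to be pre-scaled to [0, 1]."""
    return sum(weight * signals[name] for name, weight in WEIGHTS.items())


def is_standstill_complex(signals: Mapping[str, float]) -> bool:
    """Flag the take-on when the combined confidence score exceeds the threshold."""
    return standstill_score(signals) > THRESHOLD


# Usage: strong agreement across signals passes, a mixed picture does not.
clear_case = is_standstill_complex({name: 1.0 for name in WEIGHTS})
mixed_case = is_standstill_complex({name: 0.5 for name in WEIGHTS})
```

In a real pipeline the rolling averages would also have to be computed within each possession and team, as described above; that grouping step is left out of the sketch.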

On paper, the complex approach sounds better. It accounts for more context, handles edge cases, and doesn't rely on arbitrary cutoffs. But sounding better and being better are different things — and in analytics, the way you test that is by checking which metric is more repeatable.

The first red flag

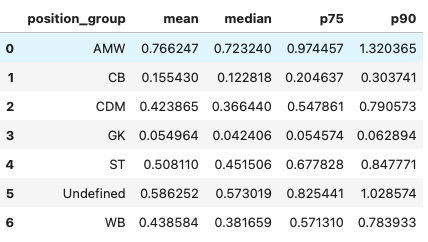

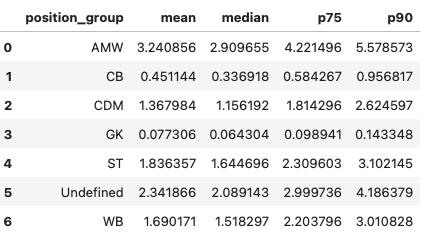

After calculating the volume of standstill take-ons normalised per 30 minutes in possession, I noticed a big drop in the number flagged by the new, complex method. More importantly, under the new method attacking midfielders and wingers had about as much standstill dribbling per 30 minutes in possession as other positions.

This doesn't seem plausible, given how much the volume of all types of take-on per 98 differs across positions:

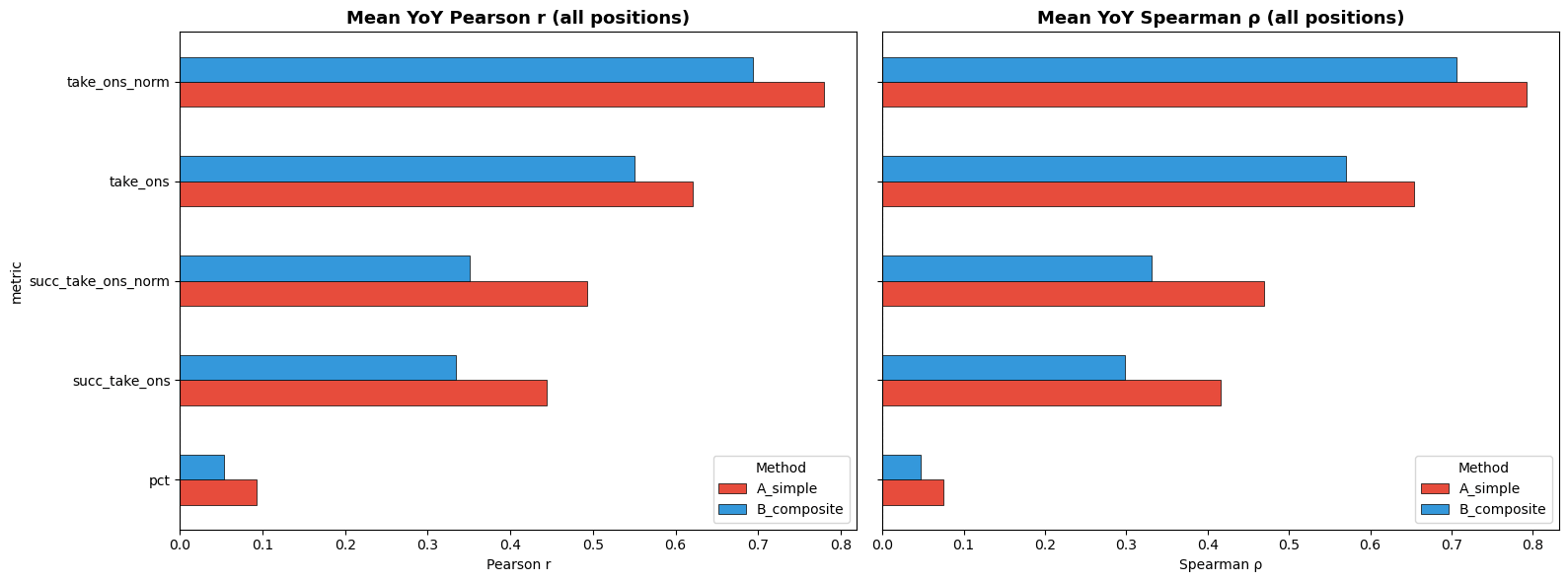
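For reference, the per-30-minutes-in-possession normalisation mentioned above is just a rate rescaling. A minimal sketch, with a hypothetical function name and argument names:

```python
def per_30_min_in_possession(standstill_take_ons: int, minutes_in_possession: float) -> float:
    """Scale a raw count to a rate per 30 minutes of the team being in possession."""
    if minutes_in_possession <= 0:
        raise ValueError("minutes_in_possession must be positive")
    return standstill_take_ons / minutes_in_possession * 30.0


# Usage: 6 standstill take-ons over 90 minutes in possession.
example_rate = per_30_min_in_possession(6, 90.0)
```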

Year-over-year correlation

A metric is useful for scouting only if it tells you something stable about the player rather than the situation. If a player's standstill take-on numbers bounce around randomly from season to season, the metric is capturing noise. But if a player who ranks highly one season tends to rank highly the next, then you're measuring something intrinsic — a genuine player-level skill.

The standard test for this is year-over-year correlation. You take every player who appears in two consecutive seasons, plot their metric in Season N against Season N+1, and compute the correlation. Higher correlation means more repeatability, which means more predictive value.
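A minimal sketch of that test in plain Python, assuming each season is stored as a dictionary mapping a player identifier to the metric value (a data layout I've assumed for the example):

```python
from math import sqrt
from typing import Dict, List


def pearson(xs: List[float], ys: List[float]) -> float:
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def yoy_correlation(season_n: Dict[str, float], season_n1: Dict[str, float]) -> float:
    """Correlate the metric across players who appear in both seasons."""
    shared = sorted(season_n.keys() & season_n1.keys())
    return pearson([season_n[p] for p in shared], [season_n1[p] for p in shared])


# Usage with made-up numbers: players D and E appear in only one season and are dropped.
season_a = {"A": 1.0, "B": 2.0, "C": 3.0, "D": 9.0}
season_b = {"A": 2.0, "B": 4.0, "C": 6.0, "E": 1.0}
r = yoy_correlation(season_a, season_b)
```

In practice you would probably also apply a minimum playing-time filter before pairing seasons, so that tiny samples don't dominate the scatter.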

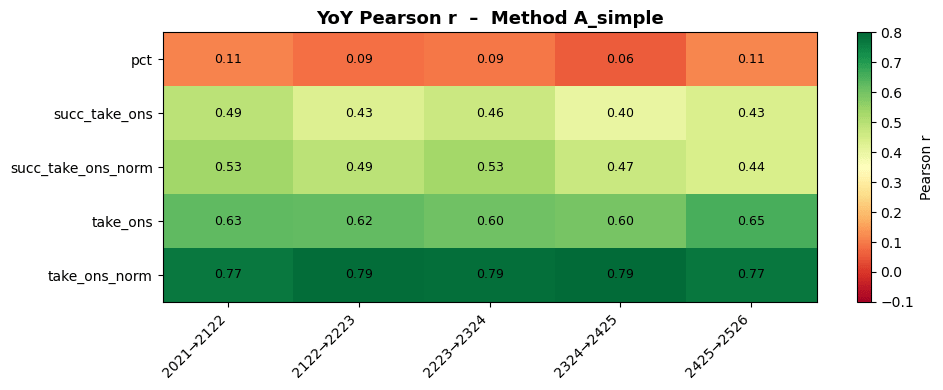

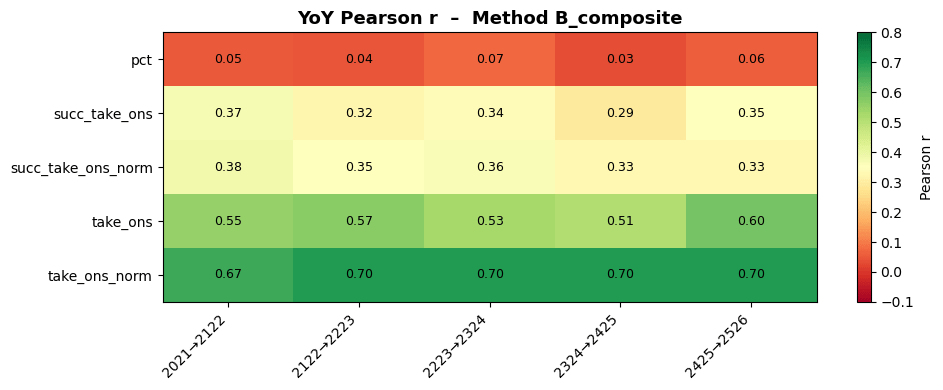

I ran this across five consecutive season pairs — from 2020/21 → 2021/22 all the way to 2024/25 → 2025/26 — covering thousands of players across multiple leagues.

The results

The simple method won. Not marginally — clearly.

For the key metric (standstill take-ons per ~game, normalised for playing time), the simple approach averaged a Pearson correlation of 0.78 across all five season pairs. The complex approach managed 0.69. A gap of 0.09 is substantial for two proxies built on the same underlying events.

What's more, the simple method's 0.78 was remarkably stable: it ranged from 0.77 to 0.79 depending on the season pair. There was essentially no variance — the metric behaved the same way regardless of which two seasons you compared.

The pattern held across every metric I tested. Raw counts, normalised rates, successful take-ons — the simple method won them all. The only metric where both performed equally was success rate, and that's because both were essentially at zero (0.09 and 0.05).

Why simpler wins here

This is counterintuitive but actually makes sense once you think about it. The complex approach introduces several layers of rolling averages, weighted scores, and contextual adjustments. Each layer adds information — but it also adds variance. A borderline take-on might get classified as "standstill" in one season but not another, depending on what happened two or three actions earlier in that specific possession. The method is more sensitive to context, which means it's also more sensitive to noise.
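A toy simulation can illustrate the mechanism. This is not my actual data: it assumes each player has a fixed underlying "skill" and that each season's measured value is that skill plus independent random noise. The only thing that changes between the two runs is how noisy the measurement is.

```python
import random
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def simulated_yoy(noise_sd, n_players=2000, seed=0):
    """Year-over-year correlation when each season = true skill + random noise."""
    rng = random.Random(seed)
    skill = [rng.gauss(0.0, 1.0) for _ in range(n_players)]
    season_a = [s + rng.gauss(0.0, noise_sd) for s in skill]
    season_b = [s + rng.gauss(0.0, noise_sd) for s in skill]
    return pearson(season_a, season_b)


low_noise = simulated_yoy(noise_sd=0.5)   # a "blunt" measurement with less noise
high_noise = simulated_yoy(noise_sd=1.0)  # the same skill, measured more noisily
```

Under this toy model the expected correlation is 1 / (1 + noise_sd²), so the low-noise run lands near 0.8 and the high-noise run near 0.5, even though the underlying skill is identical in both.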

The simple method, by contrast, asks a very blunt question: was the player basically standing still, yes or no? That bluntness turns out to be a feature. It captures the same core population of standstill take-ons with less random fluctuation at the edges.

What I'll use going forward

Based on this analysis, the simple threshold approach is what I'll be using in my work. We're still talking about proxies at the end of the day, and the simpler one requires fewer calculations, takes less time, and produces more stable results. It's a little unfortunate that the time spent on the complex version didn't pay off, but it was certainly fun to try to improve my proxy, and parts of this work could be kept to refine it in the future.